A Swedish dub of my own video, in one afternoon

Blog post #38

A few weeks ago I shipped the first episode of AI Tool in 3 Min — an editorial-style YouTube explainer on ChatGPT, in English. Today I sat down with Claude Code and asked a simple question: could I rebuild the same video in Swedish, end to end, in an afternoon?

Turns out: yes.

By the time my coffee was cold I had a 3:41 cut sitting in my renders folder — same script, same brand, same overlays — but spoken in Swedish by a version of my own voice, with my own avatar lip-syncing along. I uploaded it to YouTube next to the English version. Same title, language tag set to Swedish.

This post is the build log.

What I started with

The English video already had all the expensive parts done:

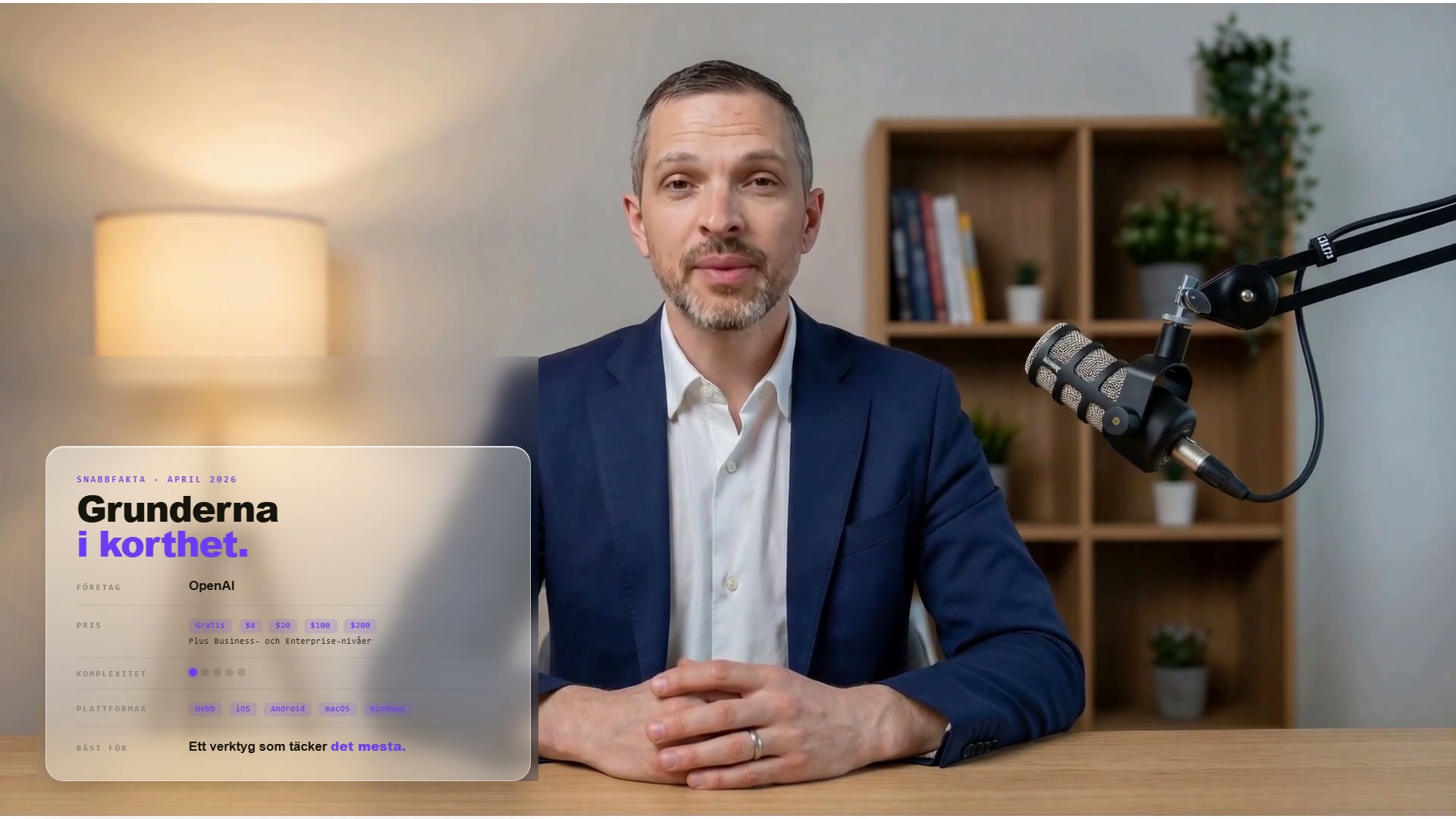

- A 3:33 HeyGen avatar source — me, in a navy shirt, sitting in the same room, reading the English script

- 13 overlay graphics from the English cut — quick-facts card, voice mode, Codex, math, study mode, Deep Research, and so on

- A working ffmpeg pipeline that composes the HeyGen MP4 with PNG overlays at planned timestamps

- A glass-blur trick for the quick-facts card, where ffmpeg blurs the avatar pixels behind the card so it looks like frosted glass

So the question wasn’t can I build a video? It was: how much of this pipeline survives a language change?

Answer: most of it. The MP4 spine doesn’t, the audio doesn’t, and the overlay text doesn’t. Everything else does.

The four pieces I had to redo

1. Swedish VO. I’d built a “Stefan” voice in ElevenLabs months ago for an earlier project. I fed it the Swedish script: a translation of the English narration that I’d already cleaned up so it sounds natural when spoken, not merely translated. ElevenLabs now pronounces acronyms like ChatGPT and PDF naturally in Swedish, which it didn’t a year ago. One pass, one mp3.

2. Swedish lip-sync. HeyGen took the Swedish mp3 and re-rendered the avatar with Swedish mouth shapes. Same outfit, same room, same posture — different language. This is the part that still feels like science fiction to me. A year ago this was a film crew. Now it’s a drag-and-drop and ten minutes of GPU time.

One concession here: I dropped down from HeyGen Avatar 5 (the latest, what I used for the English version) to Avatar 3. The cost-per-minute on V5 makes a single 3-minute language version expensive enough that a serious YouTube cadence stops being viable. V3 is meaningfully cheaper. The lip-sync is good enough — you can see the difference if you look for it, but a casual viewer probably won’t. This is the kind of tradeoff that doesn’t show up in a glossy demo and is impossible to avoid once you’re trying to ship more than one video.

3. Swedish overlays. The 13 PNGs in the English version had English text baked in — “RELEASED APRIL 2026”, “VENDOR · OpenAI”, “STUDY MODE”, and so on. I regenerated them in ChatGPT (image gen, in the web UI — my enterprise account has no API key, so this part is manual). Claude wrote me a shopping list of exact prompts for each. I generated, downloaded, dropped them into a folder named nyabilder-sv.

4. The quick-facts glass card. This one I kept as HTML — Inter + JetBrains Mono on a cream background, rendered through Chrome headless to a transparent PNG, then composited back with ffmpeg’s gaussian blur so it looks like the card sits on top of a frosted version of my own face. Translating it meant editing a few strings in the HTML file and re-running the screenshot pipeline. Two minutes.
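The frosted-glass composite can be sketched as an ffmpeg filter graph built in Python. This is a rough sketch under stated assumptions, not the actual build script: the function name, the card geometry, and the sigma value are hypothetical, and it assumes input 0 is the avatar video and input 1 is a full-frame transparent PNG of the card. The 12 to 22 second window is taken from the Swedish timestamps later in this post.

```python
def glass_card_filter(x: int, y: int, w: int, h: int,
                      start: int, end: int, sigma: float = 20.0) -> str:
    """Build a filter_complex string for a frosted-glass card.

    Assumes input 0 is the avatar video and input 1 is a full-frame
    transparent PNG of the card (both are assumptions for this sketch).
    """
    window = f"enable='between(t,{start},{end})'"
    return ";".join([
        # Duplicate the avatar stream so one copy can be blurred.
        "[0:v]split=2[base][copy]",
        # Cut out the region behind the card and apply a Gaussian blur.
        f"[copy]crop={w}:{h}:{x}:{y},gblur=sigma={sigma}[frost]",
        # Paste the blurred patch back at the same position.
        f"[base][frost]overlay={x}:{y}:{window}[bg]",
        # Draw the card PNG on top of the frosted patch.
        f"[bg][1:v]overlay=0:0:{window}[out]",
    ])


# Hypothetical card geometry; the time window matches the snabbfakta overlay.
graph = glass_card_filter(x=120, y=160, w=720, h=480, start=12, end=22)
print(graph)
```

The resulting string would be passed to ffmpeg's -filter_complex option, with [out] mapped as the video output.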

Where Claude actually earned his keep

The mechanical parts were fast. The fiddly parts were not.

I gave Claude my timestamps — “0:12 the snabbfakta overlay starts, 0:22 basics, 0:38 voice mode” — and asked him to update the ffmpeg filter chain. He came back with enable='between(t,22,37)' followed by enable='between(t,38,56)'. Run the build. One-second glitch in the cut where the avatar pops through between overlays.

I told him to fix it. He fixed it. Same mistake happened two scenes later. I told him to fix it and save the rule to memory so it doesn’t happen again. He did, with a feedback_overlay_timings.md file in his memory directory that now says:

When building ffmpeg overlay chains, the end time of one overlay must equal the start time of the next, exactly. Not minus one. Not plus a buffer. There’s no overlap risk: between(t,X,Y) is inclusive and later overlays render on top.

Next render: clean. And in a future session, with a future video, he won’t make that mistake again. That’s the difference between an autocomplete and a coworker. Autocomplete doesn’t remember.
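One way to make that rule hard to break is to derive every window from a single list of start times, so each overlay's end is by construction the next overlay's start. A minimal sketch, assuming timestamps in M:SS form; the helper names parse_ts and enable_windows are made up for illustration and are not part of the real pipeline.

```python
def parse_ts(ts: str) -> int:
    """Convert an 'M:SS' timestamp to whole seconds."""
    minutes, seconds = ts.split(":")
    return int(minutes) * 60 + int(seconds)


def enable_windows(starts: list[str], end: str) -> list[str]:
    """Return one enable expression per overlay, with no gaps between them.

    Each overlay ends exactly where the next one starts; the last overlay
    ends at `end`.
    """
    marks = [parse_ts(s) for s in starts] + [parse_ts(end)]
    if any(b <= a for a, b in zip(marks, marks[1:])):
        raise ValueError("timestamps must be strictly increasing")
    return [f"enable='between(t,{a},{b})'" for a, b in zip(marks, marks[1:])]


# Start times quoted above; 0:56 is where the voice mode overlay ends.
windows = enable_windows(["0:12", "0:22", "0:38"], end="0:56")
for w in windows:
    print(w)
```

With these inputs the second window becomes between(t,22,38) rather than between(t,22,37), which closes the one-second gap.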

The same thing happened with the PiP placement — my face needs to sit in the bottom-right corner of every overlay except the outro, because the overlays are designed with a hole there. He missed that initially, I corrected him, he saved a rule. Now it’s a property of the series, not a thing I have to re-explain every Tuesday.

The fade I’m proud of

Last beat of the video: a ChatGPT card has to transition into the outro endcard. First attempt, the avatar flashed full-screen for a frame between them, which was visibly jarring. Claude diagnosed it by reading the filter graph: the ChatGPT overlay ended at second 208, the outro fade started at 208, so for the duration of the fade-in the outro was being composed over the bare avatar.

Fix: extend the ChatGPT overlay through the fade window, start the outro fade underneath it, and let the outro grow opaque over the ChatGPT card instead of over the avatar. The fade now lands clean. You can see exactly where the seam is — between 3:27 and 3:28 — and it looks deliberate.
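The shape of that fix can be sketched as three filter steps. This is an illustration under assumptions, not the graph Claude produced: the stream labels, the input indices, the card start time, and the one-second fade length are hypothetical. It also assumes the outro image is fed to ffmpeg as a looping video stream, so that it has frames at the fade timestamps.

```python
def outro_crossfade(card_start: int, fade_start: int, fade_len: float) -> list[str]:
    """Return filter steps that fade the outro in over the card.

    The card stays enabled until the outro is fully opaque, so the bare
    avatar is never visible during the fade.
    """
    fade_end = fade_start + fade_len
    return [
        # Keep the card on screen through the whole fade window.
        f"[bg][1:v]overlay=0:0:enable='between(t,{card_start},{fade_end})'[carded]",
        # Fade only the alpha channel of the outro, starting at fade_start.
        f"[2:v]format=rgba,fade=t=in:st={fade_start}:d={fade_len}:alpha=1[outro]",
        # Composite the outro above the card from fade_start onward.
        f"[carded][outro]overlay=0:0:enable='gte(t,{fade_start})'[out]",
    ]


# 208 is the fade start from the post; 190 and 1.0 are placeholder values.
steps = outro_crossfade(card_start=190, fade_start=208, fade_len=1.0)
print(";".join(steps))
```

The key design choice is ordering: the outro is overlaid after the card in the chain, so while its alpha ramps up, what shows through is the card and not the avatar.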

What I notice in retrospect

The Swedish version cost me almost nothing in production friction. The expensive parts had already been paid for in the English version. Re-doing the language on top of a video used to be the project — sub agencies, voice talent, lip-replacement compositing. Today the language was the cheapest layer.

Which makes me wonder what else I’m leaving unlocalized just because the old cost structure made it feel impossible. The Healthwiki, sitting in Obsidian, is English. There’s no reason it has to be. The same dub-and-overlay pipeline that just made a Swedish ChatGPT video could, in principle, make a Swedish Healthwiki video tomorrow. Or a Spanish one. Or both.

I’m not going to do that this week. But it’s now a one-afternoon question, not a multi-month one. The barrier has moved from “is it feasible” to “is it worth it.” Which is, in my experience, the moment things actually start happening.

The Swedish version is up on YouTube under ChatGPT på 3 minuter — Svenska. The English version is the same script, different language tag. Same Stefan, now speaking both languages.